AI that lives inside ServiceNow.

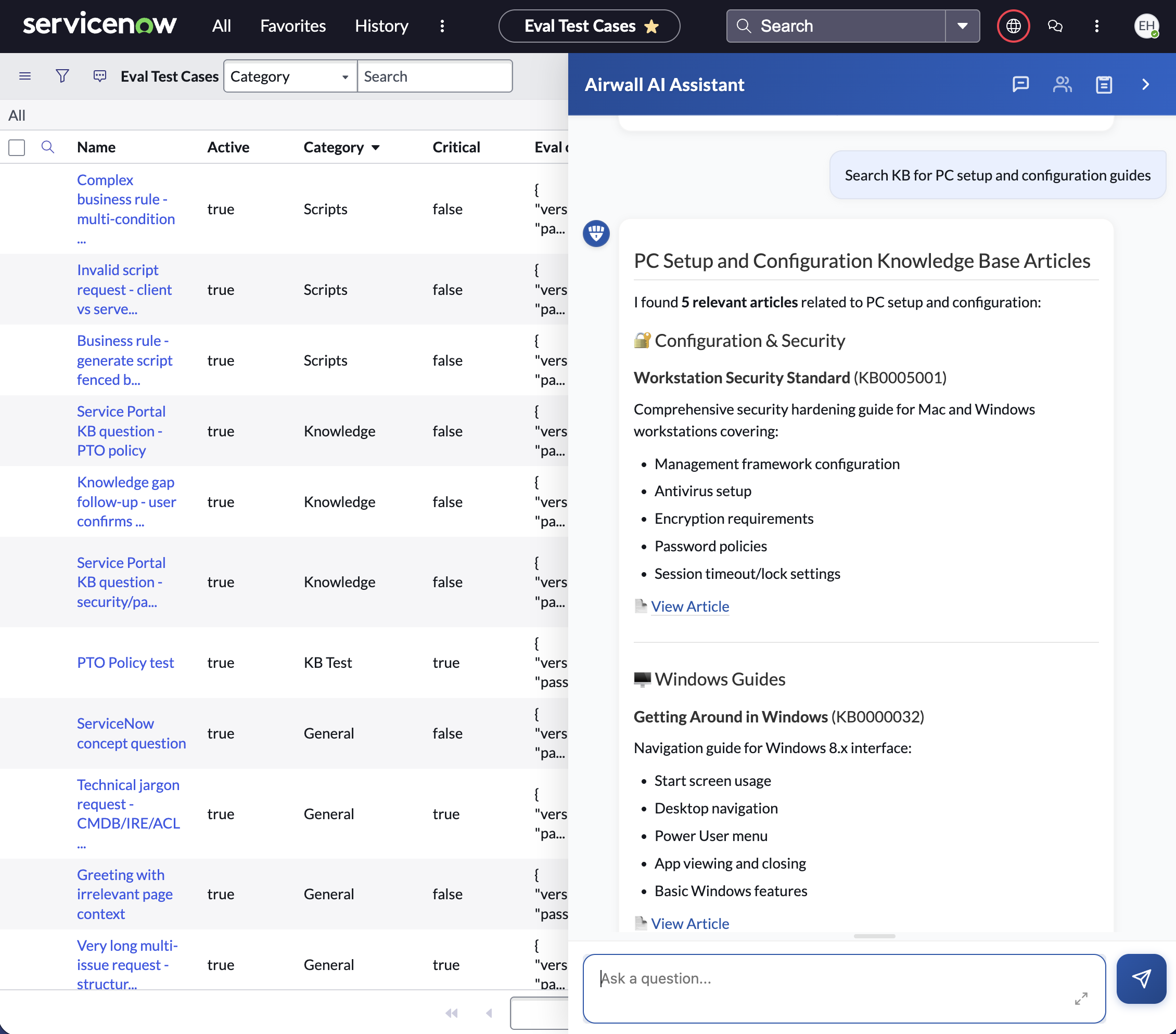

Context-aware on every page, every form, every list. It searches your knowledge base, finds catalog items across every brand, analyzes lists of any size in seconds, drafts business rules on the form, and hands off to a live agent — with full context — when needed.

- 100%: KB accuracy benchmark

- Multi-agent: specialized pipeline

- 24: documented features

- Unlimited: records per analysis

Three headline claims

Use these anywhere.

AI that lives inside ServiceNow.

Native to Classic UI, Service Portal, and Workspaces — no tab switching, no copy-pasting.

100% benchmarked accuracy on knowledge base search.

59-test KB suite, 3 runs per configuration; the top model hits 100% with full-text search (FTS) on and off.

Unlimited list size in one prompt.

Parallel processing fans the list out across workers, and a finalizer synthesizes a presentation-ready report. No row caps, no token ceilings; tested well past 20,000 rows.

Core capabilities

What it does inside ServiceNow.

ServiceNow AI Assistant

Context-aware, multi-turn, page-aware conversational AI.

Fields & Scripts Assistant

Auto-fills fields and generates Client Scripts, Business Rules, Script Includes, CSS, and HTML.

Multi-Strategy KB Search

Multiple semantic strategies run in parallel for high recall.

Catalog Item Discovery

Category browsing plus intent expansion finds items across brands that keyword search misses.

Auto-Incident Creation

When a KB or catalog search comes up empty, it files an incident automatically, closing the loop on documentation gaps.

Parallel List Workflow

Large-scale list analysis via a multi-stage planner / worker / finalizer pipeline.
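The planner / worker / finalizer pattern can be pictured as a plain fan-out/fan-in. This is a minimal sketch, not the product's implementation: it assumes the list arrives as dicts, and the function names, the 500-row chunk size, and the "count by priority" analysis are hypothetical stand-ins for the LLM calls each stage would actually make.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def plan(rows, chunk_size=500):
    """Planner (hypothetical): split the list into fixed-size chunks."""
    return [rows[i:i + chunk_size] for i in range(0, len(rows), chunk_size)]


def work(chunk):
    """Worker (hypothetical stand-in for an LLM call): summarize one chunk,
    here by counting records per priority value."""
    return Counter(row["priority"] for row in chunk)


def finalize(partials):
    """Finalizer: merge the partial summaries into one report."""
    total = Counter()
    for partial in partials:
        total.update(partial)  # adds counts rather than replacing them
    return dict(sorted(total.items()))


def analyze(rows, max_workers=8):
    chunks = plan(rows)
    # Workers run concurrently; pool.map preserves chunk order.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(work, chunks))
    return finalize(partials)


rows = [{"priority": f"P{i % 4 + 1}"} for i in range(20_000)]
print(analyze(rows))  # {'P1': 5000, 'P2': 5000, 'P3': 5000, 'P4': 5000}
```

Because no single call sees the whole list, the size limit shifts from one model's context window to the number of chunks the pool can work through.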

Benchmarks

100% KB accuracy. Verified.

59 KB tests, 3 runs per configuration, with full-text search (FTS) on and off. Top model hits 100% in both modes.

| Model | FTS On (avg) | FTS Off (avg) |
|---|---|---|
| Claude 4.5 Sonnet | 100.0% | 100.0% |
| Claude 4.6 Sonnet | 96.6% | 96.0% |
| GPT-5.4 | 98.8% | 99.4% |
| GPT-5.2 | 98.9% | 93.2% |
| GPT-4.1 | 97.7% | 83.4% |
| GPT-4o | 85.3% | 30.5% |

Catalog and incident retrieval pipelines hit 100% across every tested model in their respective suites — full methodology on the benchmarks page.

Quantified business impact

Measurable from day one.

- 40–60% faster task completion

- ~50% less time writing scripts

- +70% KB search accuracy vs. keyword search

- Reduced L1 ticket volume

Live agent handoff with full context.

One-click escalation from AI chat to a human agent via ServiceNow Advanced Work Assignment + Agent Chat. The full AI context is forwarded — what was asked, what was tried, what the AI recommended.

- Built-in live-agent chat queue

- Capacity-aware assignment with presence state

- Pre-built Virtual Agent topics for handoff and transfer

- Graceful fallback: when no agents are available, the user stays with the AI. No dead ends.

AI that knows when to step aside.
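Capacity-aware routing with a graceful fallback can be sketched as below. Treat it as an illustration under assumptions: the `Agent` fields, the `"available"` presence value, and the "most spare capacity wins" rule are hypothetical, and in the product the actual assignment is delegated to ServiceNow Advanced Work Assignment rather than to code like this.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Agent:
    name: str
    presence: str      # hypothetical presence states, e.g. "available", "away"
    active_chats: int
    max_chats: int


@dataclass
class Handoff:
    agent: Optional[str]              # None means the user stays with the AI
    context: dict = field(default_factory=dict)


def route(agents, asked, tried, recommended):
    # Forward the full AI context: what was asked, tried, and recommended.
    context = {"asked": asked, "tried": tried, "recommended": recommended}
    eligible = [a for a in agents
                if a.presence == "available" and a.active_chats < a.max_chats]
    if not eligible:
        # Graceful fallback: nobody has spare capacity, so no dead end.
        return Handoff(agent=None, context=context)
    # Hypothetical rule: pick the agent with the most spare capacity.
    best = max(eligible, key=lambda a: a.max_chats - a.active_chats)
    return Handoff(agent=best.name, context=context)
```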

Continuous-improvement loop.

Most AI stops at “Sorry, I don’t know.” Enterprise Agent opens an incident.
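The miss-to-incident loop amounts to a fallback chain. In this sketch the three callables are hypothetical placeholders injected by the caller. In a real instance, `create_incident` would go through ServiceNow's APIs (for example, a POST to the Table API on the `incident` table), which this code does not call. The `category` value is likewise an assumption.

```python
from typing import Callable


def resolve(query: str,
            search_kb: Callable[[str], list],
            search_catalog: Callable[[str], list],
            create_incident: Callable[[dict], str]) -> dict:
    """Try the KB, then the catalog; if both miss, file an incident."""
    articles = search_kb(query)
    if articles:
        return {"type": "kb", "results": articles}
    items = search_catalog(query)
    if items:
        return {"type": "catalog", "results": items}
    # Nothing matched: record the documentation gap instead of
    # stopping at "Sorry, I don't know."
    number = create_incident({
        "short_description": f"No KB article or catalog item for: {query}",
        "category": "inquiry",  # hypothetical field value
    })
    return {"type": "incident", "number": number}
```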

BYOLLM (bring your own LLM)

Switch in a config table. No code. No redeployment.

Your customer uses Azure. Their competitor uses AWS. That prospect wants OpenAI. L2H works with all of them.

AWS Bedrock

Frontier and open-source models in one integration

OpenAI

Full frontier lineup with native web search

Anthropic (direct)

Direct API for newest model rollouts

Azure OpenAI

GovCloud-eligible · vision deployments supported

Google Gemini

Native search grounding · multimodal input

xAI Grok

Native live search

Self-hosted / Open-source

Any model via OpenAI- or Anthropic-compatible endpoints
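Switching providers through a config table instead of code comes down to looking up the active row on every request. A minimal sketch, assuming a hypothetical row shape (`provider`, `model`, `endpoint`) keyed by role and a placeholder `make_client`. None of these names are the product's schema. Because the lookup runs per request, an edited row takes effect on the next message without a redeploy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    provider: str   # hypothetical keys, e.g. "openai", "bedrock"
    model: str
    endpoint: str


# Hypothetical stand-in for the config table: one active row per role.
CONFIG_TABLE = {
    "default": ModelConfig("openai", "gpt-4.1", "https://api.openai.com/v1"),
}

SUPPORTED = {"openai", "anthropic", "bedrock", "azure_openai",
             "gemini", "xai", "self_hosted"}


def make_client(cfg: ModelConfig) -> str:
    # Placeholder: a real build would construct the provider SDK client here.
    return f"{cfg.provider}:{cfg.model}@{cfg.endpoint}"


def client_for(role: str) -> str:
    # Read the table on every request so edits apply to the next message.
    cfg = CONFIG_TABLE.get(role, CONFIG_TABLE["default"])
    if cfg.provider not in SUPPORTED:
        raise ValueError(f"unsupported provider: {cfg.provider}")
    return make_client(cfg)
```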

Deployment options

Reseller-ready, every cloud.

AWS

Best for: Standard cloud customers

Infrastructure-as-code supplied

Azure

Best for: Microsoft-aligned customers

Commercial and GovCloud paths supported

Azure GovCloud (DoD/DISA)

Best for: Federal & DoD

Configured for high-assurance environments

Kubernetes

Best for: Enterprises standardizing on K8s

Infrastructure-as-code module included

On-prem

Best for: Air-gapped / regulated

Container image + standard config/secrets pattern

Private routing

Best for: Restricted-network environments

Supported for IL5 / private-endpoint topologies

Fast install path: a typical customer is live in under a day.

Audience cuts

Built for every role.

ServiceNow Admins / Devs

- Form field assistance

- Business-rule generation

- Work-note summarization

- Significant script-writing reduction

- Slash-command library

End Users / Self-Service

- Natural-language KB search

- Catalog discovery across brands

- Auto-creates incidents for unmet needs

- L1 ticket deflection

IT Leadership / CIOs

- Multi-LLM (no vendor lock-in)

- Token budgets per role

- Data limits per instance

- Built-in eval/benchmark framework

Resellers / Partners

- One product, every customer, every cloud

- Fast install path

- Protected IP for customer-specific extensions

- Benchmark on customer data before signing

Common objections, answered.

How do we control AI cost?

Token budgets per role, an interactive budget modal, and per-request usage tracking.
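Per-role budgets with per-request tracking reduce to a running tally checked before each call. This sketch rests on several assumptions: limits are set per role but counted per user, `None` means unlimited, unknown roles get no budget, and over-budget requests are simply rejected. The product's real reset window and modal behavior are not described here.

```python
from collections import defaultdict
from typing import Dict, Optional


class TokenBudget:
    def __init__(self, limits: Dict[str, Optional[int]]):
        self.limits = limits            # role -> max tokens; None = unlimited
        self.used = defaultdict(int)    # per-user running usage
        self.log = []                   # per-request usage records

    def charge(self, user: str, role: str, tokens: int) -> bool:
        limit = self.limits.get(role, 0)  # unknown role: zero budget (assumption)
        if limit is not None and self.used[user] + tokens > limit:
            return False  # over budget; a UI could prompt the user here
        self.used[user] += tokens
        self.log.append((user, role, tokens))
        return True


budget = TokenBudget({"itil": 1000, "admin": None})
budget.charge("u1", "itil", 600)   # True
budget.charge("u1", "itil", 500)   # False: would reach 1100
```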

Our security team won't approve.

Scoped roles, row-level ACLs, secret redaction, browser-brokered tool execution, structured error codes — all built in.

We're locked into Azure / AWS.

Every major LLM provider supported — OpenAI, Anthropic Claude, Google Gemini, Meta Llama, Mistral, Cohere, Amazon Nova, xAI Grok, plus any self-hosted model. Swap in a config table. Identical product.

Will it actually work on our data?

Built-in eval/benchmark framework. Test any model on customer data before going live.

We have a Virtual Agent already.

Enterprise Agent complements VA — adds context-aware AI on every page and routes to your existing live-agent infrastructure.

Do we have to redeploy for every change?

Custom system prompt, slash commands, model choice, token budgets — all config-table edits. Effective on next message.

Technical specifications

- ServiceNow surfaces: Classic UI, Service Portal, Workspaces (Now Experience)

- Backend runtime: customer-hosted service with a structured agent framework

- Cloud targets: AWS, Azure, Azure GovCloud, Kubernetes, on-prem

- Auth (SN ↔ backend): Basic, OAuth client credentials, REST API key, private routing

- Max list size: unlimited (tested well past 20,000 rows)

- Token budgets: fully configurable per role, including unlimited

- Data caps: fully configurable per instance

- Live agent stack: Advanced Work Assignment, Agent Chat, Conversational Interfaces

- Observability: cloud and container logs with structured error codes, request IDs, token tracking

Enterprise Agent for ServiceNow.

Deploy it today.

Fast install path. First chat in under a day for a typical customer.

Use a different platform? See Enterprise Agent for VS Code or the Enterprise Agent overview (custom platforms on request).

The rest of the stack